PhD candidates Lana Olague of the University of Toronto Institute for Aerospace Studies (UTIAS) and Michelle Ye-Chen Xu (ECE) from The Edward S. Rogers Sr. Department of Electrical and Computer Engineering received international recognition from two organizations for their contributions to the field of engineering.

Zonta International awarded Lana Olague the prestigious Amelia Earhart Fellowship. Lana conducts research in the area of aerodynamic shape optimization under the supervision of UTIAS Director and Professor David Zingg. Amelia Earhart Fellowships have been awarded to hundreds of women from 59 countries, with awards totalling over $7 million. Previous Fellows have gone on to defy stereotypes, working on innovative research as scientists and engineers. Zonta International, a global organization of executives and professionals, advances the status of women worldwide through service and advocacy.

Michelle Ye-Chen Xu received an Educational Scholarship in Optical Science and Engineering from the Society of Photographic Instrumentation Engineers (SPIE). Under the supervision of Vice-Dean of Research and Professor Stewart Aitchison and Professor Gilbert Walker, Michelle is engineering hand-held cancer-diagnostic devices that use nano-grating surface plasmon (SP) sensors and nano-pillar photonic crystal (PC) sensors to improve early detection of cancer. In 2010, SPIE will award $323,000 in scholarships to 137 outstanding students around the world based on their potential for long-range contribution to optics and photonics, or a related discipline.

Olivier Trescases from The Edward S. Rogers Sr. Department of Electrical and Computer Engineering, together with Chris Lea, director of facilities at Hart House, and David Berliner, Hart House’s sustainability coordinator, took home one of three Green Innovation Awards at the Green Toronto Awards ceremony on April 23.

Their idea, Green Gym: Harvesting Energy from Exercise Equipment, looked at the energy people wasted while using exercise equipment and how that energy could be harvested as a green power source.

The award comes with $10,000 in grant money, funded by the City of Toronto and the Toronto Community Foundation, to support development of an energy-harvesting exercise bicycle.

A team of four U of T Engineering students took first prize in the first-ever Google Case Challenge at the Canadian Undergraduate Technology Conference 2010, held in April. Huda Idrees (MIE, 1T2), Hubert Ka (ECE, 1T2), Kazem Kutob (TrackOne, 1T3) and Layan Kutob (MIE, 1T2) beat out 23 other Canadian engineering teams with their “Google Voice to Voice” proposal, a real-time language translator application for Android smartphones that allows users to speak to one another in different languages.

The Google Case Challenge asked teams to create an innovative product proposal with monetization potential for one of Google’s many software platforms. The selection of the Android operating system was not disclosed until April 29, the first day of the competition. At that time, students were given two hours to brainstorm a proposal and create a product pitch. Judges shortlisted the teams based on the quality of their three-minute product pitch. During the last day of competition, judges scored each team’s final 10-minute presentation on the merits of product definition, engineering, strategy and marketing.

The U of T team credits the Faculty of Applied Science & Engineering for “setting such high standards that allow students to excel in their field of interest.” The team also thanked their professors for encouraging them to “explore many different fields of knowledge, allowing [them] to dream big and work hard to achieve [their] dreams, vision and goals.”

What’s next for this winning team of future engineers? Layan Kutob sums it up as “a dream come true.” The team won technological gear from Google, but the real prize is the opportunity to interview for positions at Google within their chosen areas of expertise.

Brendan Frey from The Edward S. Rogers Sr. Department of Electrical and Computer Engineering and Benjamin Blencowe from the Donnelly Centre for Cellular and Biomolecular Research at the University of Toronto unveiled a groundbreaking “Enigma machine” program that can decode genetic messages.

In a paper entitled “Deciphering the Splicing Code,” published on May 6 in the journal Nature, Frey and Blencowe describe how a hidden code within DNA explains one of the central mysteries of genetic research: how a limited number of human genes can produce a vastly greater number of genetic messages. The discovery bridges a decade-old gap between our understanding of the genome and the activity of complex processes within cells, and could one day help predict or prevent diseases such as cancers and neurodegenerative disorders.

Please follow the links below to read full articles on this groundbreaking research:

- U of T News: U of T researchers crack ‘splicing code’

- Nature: The code within the code

- CBC News: Canadian scientists crack hidden DNA code

- Physorg.com: Researchers crack ‘splicing code,’ solve a mystery underlying biological complexity

- Science Daily: Researchers Crack ‘Splicing Code,’ Solve a Mystery Underlying Biological Complexity

A study published by Professor Chris Kennedy (CivE), “Greenhouse Gas Emissions from Global Cities,” examined the greenhouse gas emissions of ten global cities. Professor Kennedy believes cities can learn best practices and strategies for reducing air pollutants by comparing and understanding why different cities generate the emissions they do.

Among its findings, the study reports that the Greater Toronto Area produces high emissions due to its climate and transportation consumption. The full article is available on the NEWS @ U of T website.

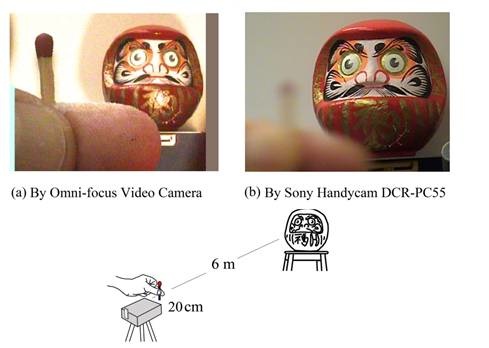

The University of Toronto, a world-leading research university, announces a breakthrough development in video camera design. The Omni-focus Video Camera, based on an entirely new distance-mapping principle, delivers automatic real-time focus of both near and far field images, simultaneously, in high resolution. This unprecedented capability can be broadly applied in industry, including manufacturing, medicine, defense and security, as well as in the consumer market.

Inventor and principal investigator of the Omni-focus video camera, Professor Keigo Iizuka of The Edward S. Rogers Sr. Department of Electrical and Computer Engineering, explains that, “the intensity of a point source decays with the inverse square of the distance of propagation. This variation with distance has proven to be large enough to provide depth mapping with high resolution. What’s more, by using two point sources at different locations, the distance of the object can be determined without the influence of its surface texture.” This principle led Professor Iizuka to invent a novel distance-mapping camera, the Divergence-ratio Axi-vision Camera, abbreviated “Divcam,” which is a key component of the new Omni-focus Video Camera.
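The reasoning in Professor Iizuka’s explanation can be illustrated with a short calculation. The sketch below is a simplified model, not the Divcam’s actual design: it assumes two point sources of equal power placed on the same axis, one a known distance behind the other, and an object that reflects both with the same (unknown) reflectance. The function names and the `separation` parameter are hypothetical. Under those assumptions the ratio of the two measured intensities depends only on distance, so the surface reflectance cancels out.

```python
import math


def reflected_intensity(power, reflectance, distance):
    """Inverse-square model: intensity falls off as 1 / distance**2.

    `reflectance` stands in for the object's surface texture (assumed model).
    """
    return reflectance * power / distance ** 2


def distance_from_ratio(i_near, i_far, separation):
    """Estimate the distance from the nearer source to the object.

    i_near: intensity measured with the nearer source switched on
    i_far:  intensity measured with the farther source switched on
    separation: assumed known spacing between the two sources

    With I_near = k / r**2 and I_far = k / (r + d)**2, the ratio is
    ((r + d) / r)**2, so r = d / (sqrt(ratio) - 1). The factor k,
    which contains the reflectance, cancels.
    """
    ratio = i_near / i_far
    if ratio <= 1.0:
        raise ValueError("nearer source must give the higher intensity")
    return separation / (math.sqrt(ratio) - 1.0)


if __name__ == "__main__":
    # Same geometry, two very different surfaces: the estimate should agree.
    for reflectance in (0.2, 0.9):
        near = reflected_intensity(1.0, reflectance, 2.0)
        far = reflected_intensity(1.0, reflectance, 2.5)
        print(reflectance, distance_from_ratio(near, far, 0.5))
```

Because only the ratio enters the formula, a dark surface and a bright surface at the same position yield the same distance estimate, which is the texture independence described in the quotation.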

The Omni-focus Video Camera is produced in collaboration with consulting investigator Dr. David Wilkes, president of Wilkes Associates, a Canadian high-tech product development company. It contains an array of color video cameras, each focused at a different distance, and an integrated Divcam. The Divcam maps distance information for every pixel in the scene in real time. A software-based pixel correspondence utility, using prior intellectual property invented by Dr. Wilkes, then uses the distance information to select individual pixels from the ensemble of outputs of the color video cameras, and generates the final “omni-focused” single video image.
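The pixel-selection step described above can be sketched in a few lines. This is an illustration only: the article does not describe how Dr. Wilkes’s pixel correspondence utility works, so the example simply picks, for each pixel, the camera whose focus distance is closest to the mapped depth. It assumes the camera frames are already aligned pixel for pixel, and the function name and data layout (nested lists) are hypothetical.

```python
def compose_omnifocus(frames, focus_distances, depth_map):
    """Build one image by taking each pixel from the best-focused frame.

    frames:          list of images (each a list of rows), one per camera
    focus_distances: focus distance of each camera, same order as frames
    depth_map:       per-pixel distance (list of rows), as a depth-mapping
                     camera might supply

    Assumption: all frames and the depth map share the same size and are
    already registered, so pixel (y, x) shows the same scene point in each.
    """
    height = len(depth_map)
    width = len(depth_map[0])
    output = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            depth = depth_map[y][x]
            # Index of the camera focused nearest to this pixel's depth.
            best = min(
                range(len(frames)),
                key=lambda k: abs(focus_distances[k] - depth),
            )
            output[y][x] = frames[best][y][x]
    return output


if __name__ == "__main__":
    near_frame = [["n", "n"], ["n", "n"]]  # camera focused at 0.5 m
    far_frame = [["f", "f"], ["f", "f"]]   # camera focused at 3.0 m
    depths = [[0.4, 2.8], [3.5, 0.6]]
    print(compose_omnifocus([near_frame, far_frame], [0.5, 3.0], depths))
```

A real system would also have to handle parallax between the cameras and transitions at depth boundaries, which this nearest-focus rule ignores.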

“The Omni-focus Video Camera’s unique ability to achieve simultaneous focus of all of the objects in a scene, near or far, multiple or single, without the usual physical movement of the camera’s optics, represents a true advancement that is further distinguished in terms of high-resolution, distance mapping, real-time operation, simplicity, compactness, lightweight portability and a projected low manufacturing cost,” says Dr. Wilkes.

The resulting image shown in Figure 1a (taken with a prototype using two color video cameras) clearly demonstrates how the omni-focused output dramatically differs from that of a conventional camera, shown in Figure 1b. Note that in the omni-focused image, the fingers in the foreground are so sharply focused that even the fingerprints are easily recognized.

Figure 2 illustrates the Omni-focus Video Camera’s high pixel resolution. Although the two sewing needles were photographed approximately 1.2 meters apart, both are in sharp focus. Note that the eye of the back needle is actually viewed through the eye of the front needle.

The camera is still in the research phase. But it’s not difficult to imagine how far-reaching an impact the Omni-focus Video Camera could have on several industries. As for the future direction of his research, Professor Iizuka sees the following possibilities:

(1) Application of the Omni-focus Video Camera to TV studio cameras. Consider the example of a musical concert being televised by a major network. Even though the singer is in sharp focus, band members in the background are invariably out of focus. Conventional video cameras are unable to focus simultaneously on both the singer and band members in the background. The Omni-focus Video Camera removes this limitation to deliver higher-quality video images and an improved viewing experience to potentially millions of TV viewers worldwide.

(2) Application of the Omni-focus Video Camera to medicine. Says Professor Iizuka, “I’d like to apply the principle of the Omni-focus Video Camera to the design of a laparoscope. It would help doctors at the operating table, if they can see the entire view without touching optics of the laparoscope, especially if dealing with a large lesion.”

Please follow the links below to read more on the applicability of this research in various industry sectors:

- Science Daily: Omni-Focus Video Camera to Revolutionize Industry: Automatic Real-Time Focus of Both Near and Far Field Images

- Popular Science: Omni-Focus Video Camera Can Focus Near and Far at the Same Time

- Security Systems News: Everything in focus, all the time

- Medical News Today: Omni-Focus Video Camera To Revolutionize Industry