Professor Xinyu Liu (MIE) and his team design robots, but their latest creation stretches the very definition of the term: though it’s controlled by a computer, the robot body has no mechanical parts.

Instead, the physical body of the robot is a tiny, soil-dwelling worm known as C. elegans.

“Essentially, what we’ve done is to replace the organism’s neural control patterns with our own control, using light-based stimulation,” says Liu. “This enables us to achieve complete closed-loop control of a living organism, which is a new strategy in robotics.”

C. elegans worms are often used in biology studies as a model organism, due to their brief lifespans, anatomical simplicity and genetic similarity to more complex organisms. Ten years ago, Liu was inspired by a video created by a German-American research team that had modified a C. elegans worm using a technique known as optogenetics.

In this method, nerve and muscle cells within organisms are genetically modified to express a type of light-sensitive protein called rhodopsin. Because C. elegans is transparent, shining light on the affected muscle cells causes them to contract and bend the worm’s body.

“In the video, they shone a laser light on the side of the worm, and that part would bend due to the muscle stimulation,” says Liu. “Our hypothesis was that if you could coordinate the pattern of that light stimulation, you could replicate the worm’s natural method of locomotion.”

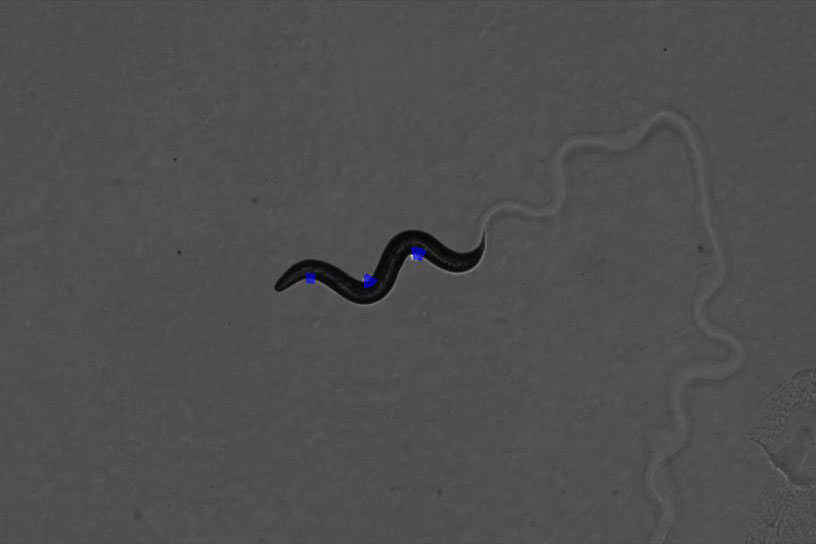

C. elegans worms move like snakes, twisting their bodies left and right in an S-shaped pattern, similar to a sine wave. Though this pattern is relatively simple from a mathematical point of view, replicating it in a controlled way turned out to be very difficult.

A key breakthrough came when Liu’s team, working in collaboration with a group led by U of T Professor Mei Zhen (Molecular Genetics & Physiology), created a genetically modified worm that was in some ways the reverse of the one in the original video Liu saw. Rather than bending its muscles when exposed to light, this one gave off light whenever its muscles were activated.

“Watching the pattern of muscle activation, we could see that it was slightly out of phase with the movement of the animal,” says Liu. “In other words, the muscles fire slightly before the actual motion. If we wanted more accurate control of where the worm was going, we needed to account for this phase difference in our light stimulation pattern.”
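The idea of a phase-shifted stimulation pattern can be illustrated with a minimal sketch. This is not the team's algorithm: the function names, the 0.4-radian lead, the 0.5 Hz frequency and the one-body-length wavelength are all assumptions chosen for illustration. The sketch models the desired body bend as a sine wave travelling from head to tail, and issues the light command slightly earlier in the cycle so that the muscles fire before the motion they produce.

```python
import math

def desired_bend(t, position, freq_hz=0.5, wavelength=1.0):
    """Target bend at a point along the body, as a travelling sine wave.

    position runs from 0.0 (head) to 1.0 (tail). The result lies in [-1, 1];
    the sign stands for the bend direction (left or right).
    All parameter values here are illustrative assumptions.
    """
    return math.sin(2 * math.pi * (freq_hz * t - position / wavelength))

def light_command(t, position, phase_lead=0.4, freq_hz=0.5, wavelength=1.0):
    """Hypothetical stimulation command for the same point on the body.

    It is the same wave advanced by phase_lead radians, so the command
    peaks before the bend it is meant to produce.
    """
    return math.sin(2 * math.pi * (freq_hz * t - position / wavelength) + phase_lead)

# Sample the command along ten evenly spaced body points at t = 1.0 s.
pattern = [light_command(1.0, i / 10) for i in range(10)]
```

Advancing the phase by `phase_lead` is equivalent to issuing the command `phase_lead / (2 * math.pi * freq_hz)` seconds early, which is one simple way to express "the muscles fire slightly before the actual motion."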

In a paper recently published in Science Robotics, Liu and his team describe how they created RoboWorm. An optogenetically modified C. elegans worm is subjected to a pattern of light stimulation, controlled by sophisticated algorithms developed by Liu and his team, which activates the worm’s muscles in the right sequence to replicate the snake-like locomotion pattern.

The algorithms achieve what is known in robotics as closed-loop control. This means that the team used microscope images to precisely measure the location and movements of the worm at a given moment, then fed that measurement back into the algorithms' next decision in order to accurately steer the worm to the next target point.
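The feedback loop itself can be sketched in a few lines. The example below is a deliberately simple proportional steering controller acting on a simulated point, not the controller described in the paper. The function names, gain, speed and tolerance are assumptions, and the simulated position stands in for what the real system would measure from microscope images.

```python
import math

def heading_error(x, y, heading, target):
    """Signed angle in [-pi, pi] from the current heading to the target bearing."""
    bearing = math.atan2(target[1] - y, target[0] - x)
    diff = bearing - heading
    return math.atan2(math.sin(diff), math.cos(diff))

def steer_to_target(start, heading, target, speed=0.05, gain=0.5,
                    max_turn=0.3, tol=0.1, max_steps=2000):
    """Drive a simulated point toward target and return the path taken.

    Each iteration measures the pose, compares it with the goal, and
    corrects the heading in proportion to the error (clamped to max_turn).
    All parameter values are illustrative assumptions.
    """
    x, y = start
    path = [(x, y)]
    for _ in range(max_steps):
        if math.hypot(target[0] - x, target[1] - y) < tol:
            break  # close enough to the target point
        # Measurement step: in a real system this would come from image analysis.
        err = heading_error(x, y, heading, target)
        # Control step: turn toward the target, limited to a maximum turn rate.
        turn = max(-max_turn, min(max_turn, gain * err))
        heading += turn
        x += speed * math.cos(heading)
        y += speed * math.sin(heading)
        path.append((x, y))
    return path

path = steer_to_target(start=(0.0, 0.0), heading=0.0, target=(1.0, 1.0))
```

Because the correction is recomputed from a fresh measurement at every step, small disturbances do not accumulate. That is the practical difference between closed-loop control and playing back a fixed stimulation sequence.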

Using this system, Liu and his team can drive the worm forward, make it turn left and right, execute a U-turn and even navigate a simple maze.

Now that RoboWorm functions, Liu and his team envision two possible directions for it.

“Firstly, this would be helpful to biologists who might want to make the worm go somewhere it would not normally want to go. These worms naturally seek out heat and avoid bad-smelling substances; with this system we could see what happens if they do the opposite,” he says.

“But I could also see applications for a synthetic micro-robot that could be activated by a similar process.”

While many groups around the world have developed micro-robots that can crawl, swim or even grip tiny objects, most are currently controlled using a magnetic or acoustic field. Using light might open up new possibilities, perhaps even including medical robots that could be deployed into the human body.

“This is a proof-of-concept demonstration. RoboWorm is not truly a robot yet, but it could be one day.”